A couple of weeks ago, Yann LeCun posted his definition of a “world model” as used in AI on Twitter (@ylecun). The post had more than 400K views, was reposted around 500 times, and got more than 150 comments. One of the comments was mine, in which I asked whether there is a block diagram explaining how everything works together and whether it resembles the attached picture of my dynamical (kihbernetic) system model. My post was viewed a mere 35 times and had no responses.

I went through the whole thread a couple of times trying to understand the matter, and the more I read about it, the more frustrated I became with the apparent contradictions that no one seemed to be bothered by. Anyway, one of the comments I found most useful was from Erik Zamora, and it contained this block diagram:

In short, LeCun’s model is an observer that encodes observations x(t) into representations h(t), which are then fed into a predictor along with all other inputs: an action proposal a(t), a previous estimate of the state of the world s(t), and a latent variable proposal z(t), which according to LeCun “represents the unknown information that would allow us to predict exactly what happens, and parameterizes the set (or distribution) of plausible predictions.”

Both Enc() and Pred() are trainable deterministic functions, e.g. neural nets, and z(t) must be sampled from a distribution or varied over a set.
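To make the data flow concrete, here is a minimal sketch of one step of such a model. It is not LeCun's implementation: the toy arithmetic inside `enc` and `pred`, the Gaussian used in `sample_z`, and the choice of a three-item window of past representations as the state are all assumptions made for illustration.

```python
import random
from collections import deque

def enc(x):
    """Deterministic encoder: observation x(t) -> representation h(t)."""
    # Hypothetical stand-in for a trained neural net: rescale each feature.
    return [0.5 * xi for xi in x]

def pred(h, s, z, a):
    """Deterministic predictor: (h(t), s(t), z(t), a(t)) -> s(t+1)."""
    # Hypothetical dynamics: the predicted h(t+1) is h(t) shifted by the
    # action and by the latent variable.
    h_next = [hi + a + z for hi in h]
    # The state is assumed to be a bounded window of representations.
    s_next = deque(s, maxlen=s.maxlen)
    s_next.append(h_next)
    return s_next

def sample_z(rng):
    """Latent variable proposal z(t), sampled from an assumed Gaussian."""
    return rng.gauss(0.0, 0.1)

rng = random.Random(0)
x_t = [2.0, 4.0]                # observation
a_t = 1.0                       # action proposal
h_t = enc(x_t)                  # representation
s_t = deque([h_t], maxlen=3)    # state: window of past representations

# Same inputs, different z(t): a set of plausible predictions of h(t+1).
plausible = [pred(h_t, s_t, sample_z(rng), a_t)[-1] for _ in range(3)]

# With z(t) held fixed, the whole pipeline is deterministic.
fixed = pred(h_t, s_t, 0.0, a_t)[-1]
```

All the variability in `plausible` comes from z(t); the two functions themselves never draw random numbers.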

LeCun says: “The trick is to train the entire thing from observation triplets (x(t),a(t),x(t+1)) while preventing the Encoder from collapsing to a trivial solution on which it ignores the input.”

Zamora asked some very interesting questions that LeCun graciously answered as follows:

- Why s(t) and h(t) are not the same variables?

- Because s(t) may contain information about the state of the world that is not contained in the current observation x(t). s(t) may contain information accumulated over time. For example, it could be a window of past h(t), or the content of a memory updated with h(t).

- Can P(z(t)) be conditioned by actions, observations and states?

- Yes, P(z(t)) can be conditioned on h(t), a(t), and s(t).

- Why must Enc() and Pred() be deterministic?

- The cleanest way to represent non-deterministic functions is to make a deterministic function depend on a latent variable (which can come from a distribution). This parameterization makes everything much clearer and unambiguous.

- Do we use Enc() to encode x(t+1)?

- Yes, Enc() is used to encode all x(t) as soon as they are available. When training the system, we observe a sequence of x(t)s and a(t)s and train Enc() and Pred() to minimize the prediction error of h(t+1). This implies that Pred() predicts h(t+1) as part of predicting s(t+1).

- How to train P(z)?

- During training, we observe a sequence of x(t) and a(t), so we can infer the best value of z(t) that minimizes the prediction error of h(t+1). We can train a system to model the distribution of z(t) thereby obtained, possibly conditioned on h(t), a(t), and s(t).

- Alternatively, we can infer a distribution q(z) over z(t) using Bayesian inference to minimize the free energy

$$F(q) = \int_z q(z)\,E(z) + \frac{1}{b} \int_z q(z)\,\log q(z)$$

where E(z) is the prediction error of h(t+1).

This trades the average energy for the entropy of q(z).

q(z) should be chosen in a family of distributions that makes this problem tractable, e.g. Gaussian (as in variational inference for VAE and other methods).
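For a finite set of candidate z values, the free energy in the answer above can be evaluated directly, which makes the trade-off between average energy and entropy easier to see. The sketch below is only an illustration under stated assumptions: the three energies, the value of b, and the function names are invented, and z is taken to be discrete so that the integrals become sums. In that discrete case the minimizer over all distributions is known in closed form: q(z) proportional to exp(-b E(z)).

```python
import math

def free_energy(q, energies, b):
    """F(q) = sum_z q(z) E(z) + (1/b) sum_z q(z) log q(z), for discrete z."""
    avg_energy = sum(qz * ez for qz, ez in zip(q, energies))
    # Terms with q(z) = 0 contribute nothing (limit of q log q as q -> 0).
    neg_entropy = sum(qz * math.log(qz) for qz in q if qz > 0.0)
    return avg_energy + neg_entropy / b

def gibbs(energies, b):
    """q(z) proportional to exp(-b E(z)): the minimizer of F for discrete z."""
    weights = [math.exp(-b * ez) for ez in energies]
    total = sum(weights)
    return [w / total for w in weights]

# Hypothetical prediction errors E(z) of h(t+1) for three candidate z values.
energies = [0.1, 0.5, 2.0]
b = 2.0

# Point estimate: the single z with the lowest prediction error.
best_z = min(range(len(energies)), key=energies.__getitem__)

# Distribution estimate: the q(z) that minimizes the free energy.
q_star = gibbs(energies, b)
f_star = free_energy(q_star, energies, b)

# Two other candidate distributions for comparison.
f_uniform = free_energy([1.0 / 3.0] * 3, energies, b)
f_one_hot = free_energy([1.0, 0.0, 0.0], energies, b)
```

Putting all the mass on the best z (`f_one_hot`) gives the lowest average energy but no entropy, while the uniform distribution gives the highest entropy but a poor average energy; `q_star` sits between the two and has the lowest free energy of the three.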

Now, to give you an idea of my “proficiency” with AI, let’s just say that the last part of LeCun’s explanation, starting with “Alternatively”, is way above my head. I am not a software engineer, and my average knowledge of math is enough to vaguely understand the mechanics of it all, but I can’t say anything about the complicated physical calculations and computational “tricks” that SW engineers regularly use to do their “magic” in solving problems. However, having spent most of my career working alongside SW developers, I know that they can sometimes get carried away and try to implement “the right solution for the wrong problem”. So it is the “mechanics” of the AI problem that I want to talk about next, not the underlying “physics” and algorithms used to implement it, which were the main topic of discussion in the Twitter thread and the part I had trouble following.

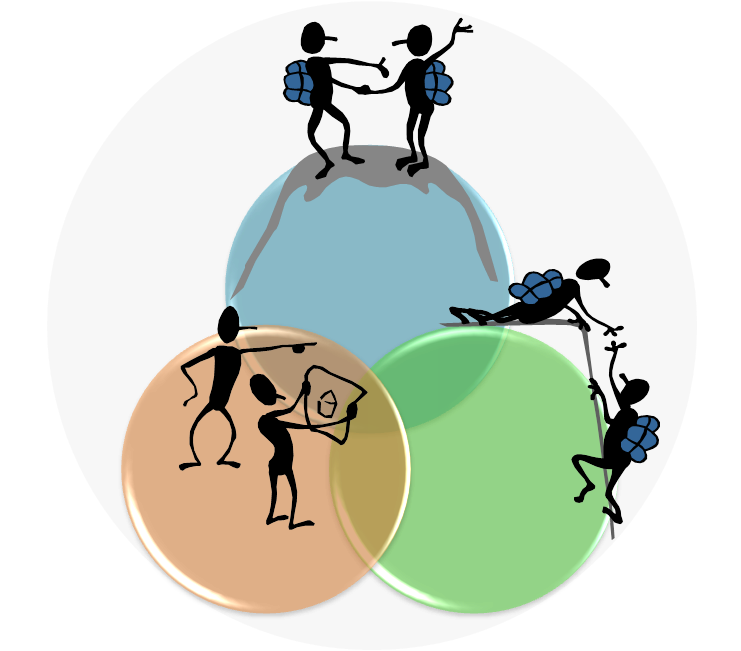

The mechanics I know best are those of control in dynamical systems. An AI is a dynamical learning system, and all such systems have similar structures built from some mandatory components. Of course, the details of the underlying “algorithms” will differ with the physical substrates that the components (natural and/or artificial) are made of, and with the capabilities that each of those systems can support. The mandatory components of any dynamical (kihbernetic) system, and the relationships between them that I identify in my Kihbernetic system model, are as follows:

1️⃣ System with a unique identity. Learning systems will individually grow through a unique sequence of interactions with their immediate environment and thus have a unique history of experiences that cannot simply be duplicated into another, identical system.

2️⃣ Processes – a recursive internal autopoiesis of learning and growth, and the linear allopoiesis dealing sequentially with external environmental issues such as collecting resources and getting rid of waste, tracking the state of the environment and reacting appropriately, and cooperating with other similar entities. These processes are distributed across the following control levels.

3️⃣ Control levels – the immediate regulation of a large number of variables for maintaining the overall stability (homeostasis) of the system; the control of the regulators by tracking their performance and spreading their burden, as well as the implementation of “higher goals” by planning and scheduling the necessary regulators’ engagement; and finally the guidance level for maintaining the identity, the long-term goals, and the character of the system.

4️⃣ Variables – Input (material, energy, and data), Output (waste, action/behavior, data), Information (“the difference that makes a difference”), and the Knowledge state of the system.

5️⃣ Functions – Reaction, Perception, Integration, Prediction, and Control

It is these four variables and five functions that I wish to discuss in more detail related to the “World Model” proposal from above.

LeCun’s model specifies only two functions, Encoding Enc() and Prediction Pred(), but as Zamora correctly identifies (and LeCun seems to agree), it should have at least two more: a Delay function that serves as a kind of memory for preserving the previous state(s) of the model, and a Generator (P(z) in Zamora’s depiction) of the latent variable proposal or unknown information z(t). However, it is still unclear (to me) how (where) the action proposal a(t) may be generated. LeCun explains the meaning of a(t) with this example, which does not really help in identifying the originator:

x(t): a glass is sitting on a table

a(t): a hand pushes the glass by 10cm

x(t+1): the glass is now 10cm away from where it was.

Obviously, a(t) and z(t) are the variables I have the most “problems” with. I can understand the concepts of observation x(t) and state s(t), which correspond to the Input and Knowledge variables in my model. I can even understand the concept of an internal representation h(t) of the observed data x(t), which corresponds to the arrow pointing out from the Perception function (B) in my kihbernetic model. That function is responsible for converting (transducing) external physical perturbations of the “sensory apparatus” into internal low-level “neural states” of the observer system, which are more suitable for further neural “processing”. However, simply selecting a(t) and z(t) by sampling from a distribution, or by varying them over some prepared set, seems (to me) like a “cheap trick” for making a deterministic function look like it is not deterministic.

The variable a(t) (the action proposal) is ambiguous. It can represent either one proposal (intention) among many that the observer evaluates while internally running a simulation to identify the best option for a future action, or the final proposal selected for a real action (experiment). Proposals of the first kind affect (refine) only the observer’s knowledge of the world in the form of the state s(t). The final proposal, in contrast, may change things in the real world, and its consequences may become known only after making the next observation x(t).

The variable z(t), which as LeCun says “represents the unknown information that would allow us to predict exactly what happens”, is exactly that: information. The only thing is that, by definition, all information is unknown. If it were known, it would be knowledge rather than information. In the kihbernetic model, we use knowledge to extract information from data. Two different observer systems (or even the same system at different points in time) will extract different information from the same set of data, depending on their current knowledge state.

To better explain my view of an appropriate representation of an “AI world model”, I produced the following mapping of LeCun’s world model onto my kihbernetic system model. LeCun’s Pred() function combines my Perception and Prediction, while Enc() maps to my Control & Reaction:

Pred() and Enc() are still deterministic algorithmic functions, but their meaning in my interpretation is somewhat different from the original. The thing that introduces a degree of uncertainty is the (also deterministic) memory function at the top, which can range from a simple delay function to a more complex integrator maintaining the knowledge state of the whole system.

Instead of just doing the trivial transformation of external observations (measurements) x(t) into internal representations h(t), the Enc() (encoding) function now encodes the final action proposal a(t), selected as the most appropriate (best) response to the input (prompt). That response will induce (or not) some reaction in the real world, which may be witnessed in some subsequent input x(t). Obviously, the real world acts here as the “reward system”, which is how it should be if we want our AI tools to align with our values. The internal variables s(t) and a(t) coming out of the “memory function” represent the knowledge state of the system. This state is fed to the other two functions as a parameter that changes their “behavior” so that, depending on the state they are in, they may produce a different (better) output for the same input, which is, if you think about it, the definition of learning.

The Pred() (prediction) function is the centerpiece of every learning system. To learn, the system must have some expectations about the future, generated from the knowledge state discussed above. An important part of this state vector is the set of preliminary action proposals fed to the prediction function as “what if” inputs for internal “simulation” and refinement of possible real-world scenarios before selecting the one that will actually be encoded. This internal autopoietic (learning) loop “runs” at a frequency an order of magnitude higher than that of the external action loop through the environment, and its main principle is the minimization of the information variables (z(t) or s(t+1)) that represent the difference between the expected state (predicted by the world model simulation) and the actual (observed) one.

So, to conclude, let’s describe the proposed “mechanics” of all of this. An observation, input, or prompt arrives at the system, where it is encoded into an action response (output) based on the current knowledge state of the system. If the input describes a situation in the real world that is already known to the system because it dealt with it in the past, that is all that is needed for a proper response. The system can detect that this is a known problem because the predictions it made in the previous step correspond to the observed input in this step, so the output can be encoded with the existing knowledge.

If the response delivered into the real world is not appropriate for the current situation, the world will respond differently than expected and the observation will not match the prediction. The difference between the two (information) will then have to be integrated with the existing knowledge into a new (upgraded) knowledge state that will hopefully allow for a better prediction and response. The dynamics of this internal learning cycle do not depend on the dynamics of the external “reward” loop. The system is free to learn at any pace, with arbitrary precision in how closely predictions should match observations. The system can also test multiple “what if” scenarios within these learning cycles and even generate test action proposals to experiment with different scenarios in the real world.
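The mechanics described in the last two paragraphs can be sketched in a few lines of code. This is a toy illustration under strong assumptions, not an implementation of the kihbernetic model: the world is a single number that the system tries to drive to zero, the knowledge state is one estimated gain, and the names `world`, `Learner`, `rate`, and `tolerance` are all invented for the example.

```python
def world(x, a, gain=2.0):
    """The real environment: how x reacts to action a (gain is hidden)."""
    return x + gain * a

class Learner:
    def __init__(self, gain_estimate=1.0, rate=0.5, tolerance=1e-6):
        self.gain_estimate = gain_estimate  # knowledge state
        self.rate = rate                    # pace of learning
        self.tolerance = tolerance          # how closely predictions must match
        self.prediction = None
        self.last_action = 0.0

    def predict(self, x, a):
        """World model: expected next observation for a proposed action."""
        return x + self.gain_estimate * a

    def integrate(self, x):
        """Compare the observation with the previous prediction and fold
        the difference (information) into the knowledge state."""
        if self.prediction is None or self.last_action == 0.0:
            return 0.0
        information = x - self.prediction
        if abs(information) > self.tolerance:
            self.gain_estimate += self.rate * information / self.last_action
        return information

    def respond(self, x, proposals):
        """Internal what-if loop: simulate every action proposal and encode
        the one predicted to bring the observation closest to zero."""
        action = min(proposals, key=lambda a: abs(self.predict(x, a)))
        self.prediction = self.predict(x, action)
        self.last_action = action
        return action

learner = Learner()
proposals = [i / 10.0 for i in range(-50, 51)]  # candidate actions
x = 4.0
for _ in range(8):            # external action loop through the world
    learner.integrate(x)      # observation versus prediction
    a = learner.respond(x, proposals)
    x = world(x, a)           # the world answers with the next observation
```

Each pass through the external loop evaluates all 101 proposals internally before a single real action is taken, and the gain estimate only changes when the world's answer differs from what was predicted.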

Finally, I want to draw attention to the work of researchers like Albert Gu, who use structured state space models (SSMs) as the basis for developing AI agents (see for example here). This work seems very promising to me, with lots of potential, and similar to the ideas I’ve presented in this post.
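For readers unfamiliar with the term, the sketch below shows a plain discrete linear state space recurrence, the textbook form that such models build on. It is not Gu's method: the structured parameterizations and training procedures used in that research are not shown, and the matrices and input sequence are made-up values.

```python
def mat_vec(M, v):
    """Multiply matrix M (list of rows) by vector v."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def ssm_run(A, B, C, inputs):
    """x[k] = A x[k-1] + B u[k];  y[k] = C x[k]  (one input, one output)."""
    state = [0.0] * len(A)      # hidden state: the system's memory
    outputs = []
    for u in inputs:
        Ax = mat_vec(A, state)
        state = [ax + b * u for ax, b in zip(Ax, B)]
        outputs.append(sum(c * x for c, x in zip(C, state)))
    return outputs

# Hypothetical two-dimensional state with a fast and a slow decaying mode.
A = [[0.5, 0.0], [0.0, 0.9]]
B = [1.0, 1.0]
C = [1.0, 1.0]

# A single input pulse keeps echoing in the output through the state.
outputs = ssm_run(A, B, C, [1.0, 0.0, 0.0])
```

The hidden state plays the same role as the memory function discussed earlier: the output at each step depends on the accumulated history of inputs, not only on the current one.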